Mideast & Africa News

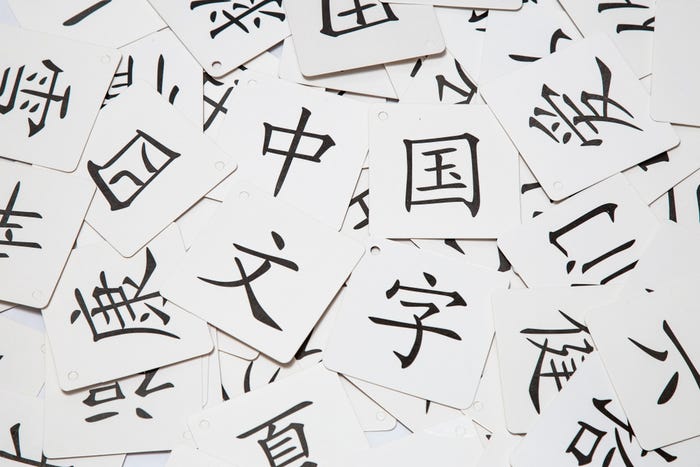

Chinese Keyboard Apps Open 1B People to Eavesdropping

Eight of the nine apps that people use to input Chinese characters on mobile devices have weaknesses that allow a passive eavesdropper to collect keystroke data.

Deep Reading

Dark Reading talks cloud security with John Kindervag, the godfather of zero trust.

Airbnb's Allyn Stott introduces a maturity model, inspired by the Hunting Maturity Model (HMM), that complements MITRE ATT&CK and improves security metrics analysis.

Caller ID spoofing and AI voice deepfakes are supercharging phone scams. Fortunately, we have tools that help organizations and people protect themselves against the devious combination.

Cybersecurity Features In-Depth: On security strategy, latest trends, and people to know. Brought to you by Mandiant.

Security Technology: Featuring news, news analysis, and commentary on the latest technology trends.

Just like you should check the quality of the ingredients before you make a meal, it's critical to ensure the integrity of AI training data.

The tech giant tosses together a word salad of today's business drivers — AI, cloud-native, digital twins — and describes a comprehensive security strategy for the future, but can the company build the promised platform?

Modern networks teem with machine accounts that perform simple automated jobs yet are given too many privileges and left unmonitored. Fix that situation and you close an attack vector.

The payment card industry pushes for more security in financial transactions to help combat increasing fraud in the region.

Lazarus, Kimsuky, and Andariel all got in on the action, stealing "important" data from firms responsible for defending their southern neighbors (from them).

Breaking cybersecurity news, news analysis, commentary, and other content from around the world.
