Technology, Regulations Can't Save Orgs From Deepfake Harm

Monetary losses, reputational damage, share price declines: AI-based attacks are hard to counter, much less stay ahead of.

June 6, 2024

With a lack of technologies and regulations to blunt the impact of fake audio, images, and video created by deep-learning neural networks and generative AI systems, deepfakes could serve up some costly shocks to businesses in the coming year, experts say.

Currently, deepfakes top the list of concerning cyber threats, with a third of companies considering deepfakes to be a critical or major threat. Some 61% of companies have experienced an increase in attacks using deepfakes in the past year, according to a report released this week by Deep Instinct, a threat-prevention firm. Attackers will likely keep innovating and adapting deepfakes to improve on current fraud strategies, using generative AI to defeat financial institutions' know-your-customer (KYC) measures, manipulate stock markets with reputational attacks against specific publicly traded firms, and blackmail executives and board members with fake, but still embarrassing, content.

In the short term, the impact of a deepfake campaign aiming to undermine the reputation of a company could be so great that it affects the firm's general creditworthiness, says Abhi Srivastava, associate vice president of digital economy at Moody’s Ratings, a financial information firm.

"Deepfakes have potential for substantial and broad-based harm to corporations," he says. "Financial frauds are one of the most immediate threats. Such deepfake-based frauds are credit negative for firms because they expose them to possible business disruptions and reputational damage and can weaken profitability if the losses are high."

Deepfakes have already become a tool for attackers behind business-leader impersonation fraud — in the past referred to as business email compromise (BEC) — where AI-generated audio and video of a corporate executive are used to fool lower-level employees into transferring money or taking other sensitive actions. In an incident disclosed in February, for example, a Hong Kong-based employee of a multinational corporation transferred about $25.5 million after attackers used deepfakes during a conference call to instruct the worker to make the transfers.

You Ain't Seen Nothing Yet

With financial institutions rarely meeting their own customers, company employees increasingly working remotely, and deepfake technology becoming easier to use, attacks will only grow in number and become more effective, says Carl Froggett, CIO at Deep Instinct and the former head of global infrastructure defense at financial firm Citi.

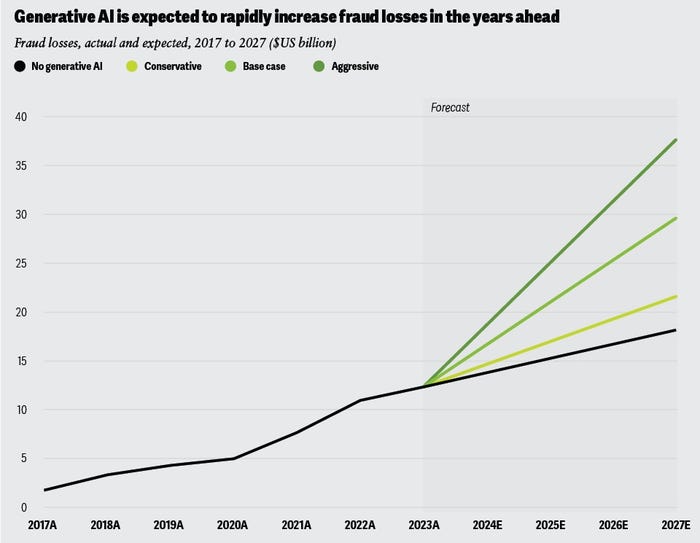

Generative AI has supercharged fraud losses, leading predicted losses to double by 2027. Source: Deloitte Insights

Overall, three-quarters of companies saw an increase in deepfakes impersonating a C-level executive, according to the "Voice of SecOps" report published by Deep Instinct.

"Key individuals, CEOs, board members, they're going to be the targets especially for individual and deepfake kind of reputational damage, so we can no longer cut them some slack ... and let them bypass a security measure," Froggett says. "We don't really have a good technical answer for them at this point, and they're just going to get worse, so identity and phishing-resistant technology are going to be really important."

Financial institutions will feel the most pain. Financial fraud losses are set to accelerate due to generative AI, with consultancy Deloitte forecasting that losses for the banking industry could reach $40 billion by 2027, double the predicted losses prior to the advent of generative AI.

Fake CEOs, Real Losses

Deepfakes have also arguably had an impact on stock market prices. A year ago, a picture of an explosion at the Pentagon shared through a verified Twitter account and propagated by multiple news agencies caused the S&P 500 to shed 1% of its value in minutes, before traders discovered it was likely AI-generated. In April, India's National Stock Exchange (NSE) had to issue a warning to investors after a deepfake video appeared of the NSE's CEO recommending specific stocks.

However, a deepfake of a CEO recommending a stock or releasing misinformation is unlikely to trigger the US Securities and Exchange Commission's material disclosure rule, says James Turgal, vice president of global cyber-risk and board relations at Optiv, a cyber advisory firm.

"The threshold for an SEC cyber disclosure would be difficult based upon a deepfake video or voice impersonation, as there would have to be proof, from the shareholders’ point of view, that the deepfake attack caused a material impact on a corporation's information technology system," he says.

While companies that do not stem deepfake fraud's impact on their own operations may face a credit penalty, the regulatory picture is still blurry, says Moody's Srivastava.

"If deepfakes grow in scale and frequency and turbocharge cyberattacks, the same extant regulatory implications that apply to cyberattacks can also apply to it," he says. "However, when it comes to deepfakes as a standalone threat, it appears that most jurisdictions are still deliberating whether to enact new legislation or if existing laws are sufficient, with most of the focus being election-related and adult deepfakes."

Is Technology a Solution or the Problem?

Unfortunately, the technological picture around deepfakes still favors the attacker, says Optiv's Turgal.

So, while they search for technical solutions, many companies are reinforcing processes that add checks capable of stopping deepfake scams, such as requiring verbal authentication by senior leaders for monetary transactions over a certain amount and sending authentication codes to a trusted electronic device, he says. Some companies are even moving away from technology and embracing person-to-person interaction as the final check.

"As the deepfake technology threat grows, I see a move back by some companies to good old-fashioned person-to-person interaction to create a low-tech two-factor authentication solution to mitigate the high-tech threat," Turgal says.
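The layered checks Turgal describes can be sketched as a simple approval policy. The Python below is a minimal illustration, not any company's actual process: the threshold value, the `TransferRequest` fields, and the function names are all hypothetical, and it assumes a device code is required for every transfer while verbal confirmation is required only above the threshold.

```python
import hmac
import secrets
from dataclasses import dataclass

# Hypothetical value: the article only says "over a certain amount".
VERBAL_CHECK_THRESHOLD = 10_000


@dataclass
class TransferRequest:
    requester: str
    amount: float
    device_code_ok: bool = False   # code sent to a trusted device was confirmed
    verbal_check_ok: bool = False  # a senior leader confirmed in person or by a known-good channel


def issue_device_code() -> str:
    """Generate a six-digit one-time code to send to the trusted device."""
    return f"{secrets.randbelow(1_000_000):06d}"


def confirm_device_code(expected: str, supplied: str) -> bool:
    """Compare the two codes in constant time."""
    return hmac.compare_digest(expected.encode(), supplied.encode())


def missing_checks(request: TransferRequest) -> list[str]:
    """Return the checks still outstanding; an empty list means the transfer may proceed."""
    outstanding = []
    # Assumption: every transfer needs the device code.
    if not request.device_code_ok:
        outstanding.append("device_code")
    # Assumption: only transfers above the threshold need verbal confirmation.
    if request.amount > VERBAL_CHECK_THRESHOLD and not request.verbal_check_ok:
        outstanding.append("verbal_confirmation")
    return outstanding
```

In this sketch, a large transfer requested over a video call stays blocked until both flags are set by steps that happen outside the call itself, which is the point of the low-tech second factor: a convincing face or voice on the call cannot satisfy either check.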

Creating trusted channels of communication should be a priority for all companies, and not just for sensitive processes — such as initiating a payment or transfer — but also for communications to the public, says Deep Instinct's Froggett.

"The best companies are already preparing, trying to think of the eventualities. ... You need legal, regulatory, and compliance groups — obviously, marketing and communication — to be able to mobilize to combat any misinformation," he says. "You're already seeing that more mature financials have that in place and are practicing it as part of their DNA."

Other industries, Froggett adds, will have to evolve similar capabilities as well.
