New OWASP Top 10 Reveals Critical Weakness in Application Defenses

It's time to move from a dependence on the flawed process of vulnerability identification and remediation to a two-pronged approach that also protects organizations from attacks.

When I wrote the first OWASP Top 10 list in 2002, the application security industry was shrouded in darkness. The insight that a few other engineers and I had gained through hand-to-hand combat with a wide variety of applications lived only within us. We recognized that for the industry to have a future, we had to make our knowledge public.

My idea was that we needed a top 10-style guide to help organizations focus on the key risks. I was also trying to establish a “standard of care” that would potentially allow a negligence regime to take hold and move the software industry in the right direction. I thought we were establishing a floor, and that organizations would move quickly to stamp out many of these risks.

But it hasn’t happened. Sometimes when you try to create a floor, you accidentally create a ceiling. Many organizations have an application security program that works on a small part of their application portfolio (typically just the “critical” applications) and doesn’t cover even the Top Ten. Unfortunately, this approach leads to exactly what we see in the market: huge numbers of vulnerabilities and increasingly serious breaches.

Frankly, I don’t like “top ten” lists at all. We aren’t improving at application security. If anything, we’re getting worse. The days of PDF reports, gates, and development roadblocks are over. We need to take responsibility for building defenses, creating assurance, and blocking attacks. We can’t stand by and point fingers at vulnerabilities anymore. There’s too much at stake.

2017 OWASP Top 10

The Top Ten Project has grown and matured dramatically since 2002. In May of 2016, the OWASP Top Ten Project issued an open data call to gather statistics on what organizations are seeing in terms of application security risks. A variety of organizations, consultants, and vendors contributed data on over 50,000 applications. Within these applications, over 2.3 million vulnerabilities were identified. Like everything at OWASP, the project and data are free and open.

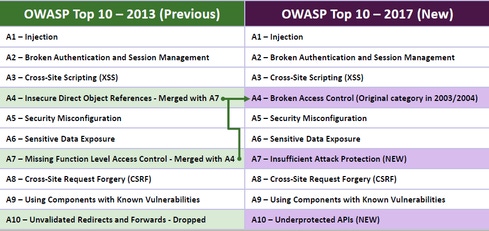

The Top Ten project is struggling to keep up with a changing software world. So in addition to data, the project always considers “future looking” concerns. Two of these items were added to the new Top Ten, and there is controversy over them in the community. Here’s a comparison of the old and new lists.

The New A7: "Insufficient Attack Protection"

One major addition to the new Top Ten is “Insufficient Attack Protection.” Traditional security defenses are like locks: they stop attackers but have no way to detect or report that they’re being attacked. And applications have no way to quickly and easily patch themselves to respond to an attack. Given how difficult it is to prevent vulnerabilities from getting into our code, we simply can’t rely solely on trying to achieve perfect code. Currently, attackers can attack with impunity, never getting detected or blocked. Eventually, that’s a recipe for disaster.

The idea that insufficient attack protection belongs in this list isn’t obvious. Is it a risk not to have a smoke alarm? A burglar alarm? A security guard? It depends on your other defenses and where you think security ought to be. Just because you try to write code without buffer overflows doesn’t mean that you don’t need ASLR. And just because you think your authentication is secure doesn’t mean you shouldn’t detect credential stuffing and implement account lockouts. Should we report the lack of these attack protections as risks? Some of these are already being reported, so the new Top Ten is really just acknowledging that the lack of attack detection, protection, and security patching actually is a risk.
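To make the credential stuffing and account lockout example concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a recommendation from the Top Ten itself: the `LoginMonitor` class, its thresholds, and its method names are all hypothetical, and a real deployment would need persistent storage, alerting, and care to avoid letting attackers lock out legitimate users.

```python
from collections import defaultdict, deque


class LoginMonitor:
    """Hypothetical helper: tracks failed logins in a sliding time window."""

    def __init__(self, max_failures=5, max_accounts_per_source=10, window=300.0):
        # All thresholds are illustrative assumptions, not published guidance.
        self.max_failures = max_failures
        self.max_accounts_per_source = max_accounts_per_source
        self.window = window  # seconds
        self._by_account = defaultdict(deque)  # account -> failure timestamps
        self._by_source = defaultdict(deque)   # source -> (timestamp, account)

    def record_failure(self, account, source, now):
        """Record one failed login attempt at time `now` (seconds)."""
        self._by_account[account].append(now)
        self._by_source[source].append((now, account))

    def is_locked(self, account, now):
        """Lock the account while it has too many recent failures."""
        events = self._by_account.get(account, deque())
        while events and now - events[0] > self.window:
            events.popleft()  # forget failures older than the window
        return len(events) >= self.max_failures

    def looks_like_stuffing(self, source, now):
        """Flag a source that recently failed against many distinct accounts."""
        events = self._by_source.get(source, deque())
        while events and now - events[0][0] > self.window:
            events.popleft()
        distinct_accounts = {account for _, account in events}
        return len(distinct_accounts) >= self.max_accounts_per_source


if __name__ == "__main__":
    monitor = LoginMonitor(max_failures=3, max_accounts_per_source=2, window=60)
    for t in (0, 1, 2):
        monitor.record_failure("alice", "10.0.0.1", now=t)
    print(monitor.is_locked("alice", now=3))
    monitor.record_failure("bob", "10.0.0.1", now=4)
    print(monitor.looks_like_stuffing("10.0.0.1", now=5))
```

The point of the sketch is that the same failed-login events serve two purposes: per-account counts drive the lockout, while per-source counts across distinct accounts are the signal that someone is replaying a stolen credential list.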

As the Top Ten project lead, Dave Wichers, writes on the project mailing list:

“As an industry, we need to evolve to keep up with both modern development practices and the increased sophistication of attackers. As Dev and Ops come together, we need developer security and operational security to come together as well. A7 is a step towards thinking of appsec as part of both Dev and Ops. We have to do a better job of advocating security for both Dev and Ops and choosing defense strategies that span the entire playing field. I think we all agree we should know where and how we are being attacked, and we should probably do something about it if we are. Help us figure out what we can recommend to help organizations get better at this.”

This is the idea. It is controversial, but seems worth exploring. Could we move from relying on the flawed process of vulnerability identification and remediation to a strategy that relies on both eliminating vulnerabilities and attack protection?

The new A10: "Underprotected APIs"

Another major addition is “Underprotected APIs.” The use of APIs has exploded in modern software. Even browser web applications are often written in JavaScript and use APIs to get data. There is a huge variety of protocols and data formats used by these APIs, including SOAP/XML, REST/JSON, RPC, GWT, and many more. But more importantly, these APIs are often unprotected and contain numerous vulnerabilities. Both traditional security tools and manual penetration testing have struggled to analyze APIs because the protocols and frameworks are so complex, so API security is often overlooked.

This new item overlaps with many of the existing Top Ten items. But it’s also a huge gap that many organizations and application security tools don’t yet cover. The Top Ten project takes a pragmatic view here, encouraging organizations to make sure their security programs actually cover their APIs. In the words of the release candidate:

“NOTE: The T10 is organized around major risk areas, and they are not intended to be airtight, non-overlapping, or a strict taxonomy. Some of them are organized around the attacker, some the vulnerability, some the defense, and some the asset. Organizations should consider establishing initiatives to stamp out these issues.”

Looking Ahead

To me, the 2017 Top 10 reflects the move towards modern, high-speed software development that we’ve seen explode across the industry since the last version of the Top 10 in 2013. While many of the vulnerabilities remain the same, the addition of APIs and attack protection should focus organizations on the key issues for modern software. I absolutely think it’s worth shaking up the list to get organizations to think about these important topics.

For all the advances we’ve made at OWASP, application security still isn’t part of every software project, it isn’t taught regularly in universities, and project plans often don’t account for it. The data call shows the result. Simply dividing the total number of vulnerabilities by the number of applications yields 45.8 vulnerabilities per application. Taking an average of the per-vendor averages instead, and eliminating the lowest and highest vendors to limit the effect of outliers, gives 20.5 vulnerabilities per application. That’s a stunning number that demonstrates just how widespread these vulnerabilities are. If we found that 1 in 20 applications had one of the Top Ten items, it would still be concerning, but we are in “crazy risk” territory now.
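The gap between the two figures comes from how the averaging is done, and a short Python sketch shows the mechanics. The contributor numbers below are invented purely for illustration and will not reproduce 45.8 or 20.5. The function names are hypothetical, and dropping the contributors with the lowest and highest per-application averages is my reading of "eliminating the lowest and highest vendors," not the project's documented method.

```python
from statistics import mean


def pooled_average(datasets):
    """Total vulnerabilities divided by total applications."""
    total_apps = sum(apps for apps, _ in datasets)
    total_vulns = sum(vulns for _, vulns in datasets)
    return total_vulns / total_apps


def trimmed_average_of_averages(datasets):
    """Average the per-contributor averages after dropping the min and max."""
    per_contributor = sorted(vulns / apps for apps, vulns in datasets)
    if len(per_contributor) < 3:
        raise ValueError("need at least three contributors to trim both ends")
    return mean(per_contributor[1:-1])


# Hypothetical (applications, vulnerabilities) pairs, one per contributor.
# These are NOT the real data call submissions.
datasets = [
    (100, 500),
    (200, 2000),
    (50, 1000),
    (1000, 90000),  # one large contributor with very high counts
    (400, 4000),
]

if __name__ == "__main__":
    print(f"pooled: {pooled_average(datasets):.1f}")
    print(f"trimmed average of averages: {trimmed_average_of_averages(datasets):.1f}")
```

With this made-up data, a single large contributor pulls the pooled figure far above the trimmed one, which is the same kind of skew that separates the two numbers quoted above.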

It’s unrealistic for anyone to expect a simple awareness document to change much. Even so, after 14 years the OWASP Top Ten is still a good way for organizations to start getting their heads around the most critical issues in application security.

If you feel strongly about these issues, please join in and get involved. The Top Ten Project appreciates your constructive feedback and needs your help. This release candidate is being updated to address a lot of the feedback already received. Given the mixed reaction of many in the security community, we’ve scheduled an open session at the OWASP Summit. Further, we’re planning to create a task force with a series of online working meetings. If the conclusion is that these new items don’t make sense, let’s get something better figured out -- together.

[Read an opposing view from Chris Eng in OWASP Top 10 Update: Is it Helping to Create More Secure Applications?]
