Malware Exploits Security Teams' Greatest Weakness: Poor Relationships With Employees

Users' distrust of corporate security teams is exposing businesses to unnecessary vulnerabilities.

In early January, Colin McMillen, the lead developer at SemiColin Games, tweeted a warning about a popular Google Chrome extension, The Great Suspender. The utility came under fire after McMillen learned the developer sold it to a third party that silently released a version that could spy on a user's browsing habits, inject ads into websites, or even download sensitive data.

After a community outcry, the new owner removed the offending code. Now aware of the change of ownership and breach of trust, many savvy users removed the extension.

Even so, The Great Suspender remained available in the Chrome Web Store until Feb. 3, when Google finally pulled the plug. Many of the extension's 2 million users found out when they received a warning that simply stated, "This extension may be dangerous. The Great Suspender has been disabled because it contains malware."

While Google eventually set things right, it took too long. McMillen's tweet shone a bright light on the problem in January, but comments on the extension's issue tracker indicate users reported it to Google as early as October 2020. This left Chrome users in a potentially vulnerable position for over three months.

How Personal Computers Put Work Devices at Risk

Sometimes, Google Chrome extensions installed on personal computers are automatically synchronized to, and installed on, work devices. This brings their problems into the purview of the security team, which must then make difficult decisions because:

The risks associated with running suspicious extensions like The Great Suspender usually affect the employee more than the company.

Before the extension was banned in February, end users had no official indication the extension was potentially malicious.

Despite the risks associated with the extension, users intentionally installed it and, presumably, were happily using it.

Security teams are accustomed to wielding impressive tools that can block, contain, and remediate clear threats. They work best in a world of absolutes, where software is either good or bad, and systems are either secure or vulnerable. In the case of The Great Suspender, a situation with many shades of gray, your average security team cannot take immediate action without backlash from users. Instead, these teams must carefully reach out to each affected employee individually and guide them toward making prudent decisions on their own. It's a muscle rarely exercised, and an activity ill-suited to the tools at hand.

Individual Intervention Opens Questions About Surveillance

Teams that are willing to brave this task manually will find a high mountain to climb. Approaching an employee about a risky extension forces all sorts of uncomfortable topics front and center. Inquisitive users may now be curious about the scope and accuracy of the company's monitoring. Now that they are working from home and surrounded by family, they wonder where the line is drawn on collecting personal data and whether there is an audit log for the surveillance. For many teams, the benefits of helping end users are not worth the risk of upsetting an already wobbly apple cart. So extensions like The Great Suspender linger, waiting for the right moment to siphon data, redirect users to malicious websites, or worse.

This seems like a significant weakness in how IT and security teams operate. Because too few security teams have solid relationships built on trust with end users, malware authors can exploit this reticence, become entrenched, and do some real damage.

How to Solve These Problems

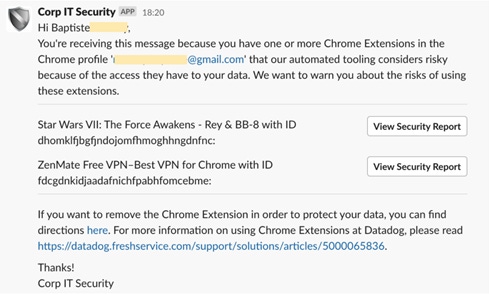

Forward-looking teams are rolling up their sleeves and developing tools to solve this. Dan Jacobson, who leads corporate IT at Datadog, told me about one his team built to handle this type of conundrum. They created a Slack app that sends end users private messages about risky Chrome extensions discovered by management software, with step-by-step instructions on removing them. "Transparency has been a core tenet of what we do here, so providing this service to our employees was a no-brainer," Jacobson says.
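To make the idea concrete, here is a minimal Python sketch of the notification step. It is not Datadog's tool, and it does not call Slack at all. The blocklist, the placeholder extension ID, the `InstalledExtension` record, and the `build_notices` function are all hypothetical names chosen for illustration. It only shows how inventory data might be turned into a plain-language private message with removal steps.

```python
from dataclasses import dataclass

# Hypothetical blocklist: extension ID -> display name.
# The ID below is a made-up placeholder, not a real Chrome extension ID.
FLAGGED_EXTENSIONS = {
    "example-extension-id": "The Great Suspender",
}


@dataclass
class InstalledExtension:
    user: str          # the employee to message
    extension_id: str  # ID as reported by the (assumed) inventory tool


def build_notices(inventory):
    """Return {user: message text} for each user running a flagged extension."""
    notices = {}
    for item in inventory:
        name = FLAGGED_EXTENSIONS.get(item.extension_id)
        if name is None:
            continue  # extension is not on the blocklist, nothing to say
        lines = notices.setdefault(item.user, [
            "Hi! Our device inventory shows a browser extension "
            "that may put your data at risk.",
        ])
        lines.append(
            f"- {name}: open chrome://extensions, find it in the list, "
            "and click Remove."
        )
    return {user: "\n".join(lines) for user, lines in notices.items()}
```

A real deployment would hand each message to a chat integration and, in keeping with the transparency Jacobson describes, tell the user exactly what data the inventory collects.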

Netflix is also doing its part in this space. Not only did its security team provide crucial feedback for my Honest Security guide, but it has also made some of its user-focused tools open source. This includes Stethoscope, which Netflix uses internally to bridge communication gaps between the security team and end users.

Source: Kolide

In an interview, Jesse Kriss, a key member of Netflix's user-focused security engineering team, said, "Netflix has a culture of transparency and values freedom and responsibility. These principles don't align very well with the typical systems-management approach. Invisible software with admin rights pushing out centrally managed policies works against that ideal, in addition to having fairly significant security risks if the admin controls are compromised. ... Given all of these things, we prefer to have a model where we can check machine configuration at access time, give people clear guidance on how to make simple changes themselves, and not rely on strict inventory or a trusted bootstrapping process. Stethoscope gives us that ability."
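The access-time model Kriss describes can be sketched in a few lines of Python. This is an illustration only, not how Stethoscope is implemented. The policy keys, the guidance text, and the `check_device` function are hypothetical. The point is that the check returns advice the user can act on, rather than silently enforcing a centrally managed policy.

```python
# Hypothetical policy: setting name -> (required value, how to fix it yourself).
POLICY = {
    "disk_encryption": (True, "Turn on full-disk encryption in your system settings."),
    "screen_lock": (True, "Enable a screen lock that requires a password."),
}


def check_device(config):
    """Return (allowed, guidance) for a device's reported configuration.

    `config` is an assumed dict of setting name -> current value.
    A missing setting is treated as not meeting the requirement.
    """
    guidance = [
        fix
        for key, (required, fix) in POLICY.items()
        if config.get(key) != required
    ]
    return (not guidance, guidance)
```

When the check fails, the user sees the specific steps to take and can retry access after making the change.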

No Time to Wait

Despite the heroic efforts of forward-looking teams, Google cannot be let off the hook. Its reaction to The Great Suspender was too slow and opaque, and it left users without answers to the basic questions anyone would ask when told they are running a malicious extension.

While we can hope for vendors like Google to do better, security teams cannot wait for the next incident before building the relationships needed to tackle the problems posed by suspect extensions. They need to start investing in relationships with end users and in methods to communicate with them at scale. This means doing the hard work of defining rules of engagement that respect users' privacy but also protect the company's interests. It also means rolling back surveillance tools that damage the trust underlying any positive relationship. The time investment may be steep but, given that the next threat might be near, the dividends are invaluable.
