
Cybersecurity Operations

CISO Corner: Breaking Staff Burnout, GPT-4 Exploits, Rebalancing NIST

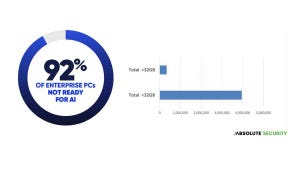

SecOps highlights this week include the executive role in "cyber readiness"; Cisco's Hypershield promise; and Middle East cyber ops heating up.

