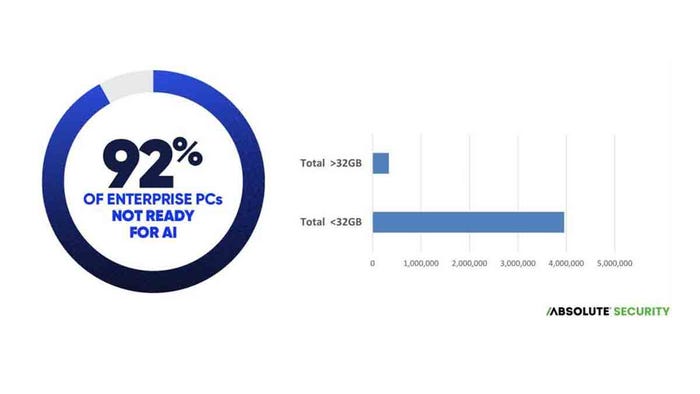

Chart showing how few devices are "AI-ready"

Endpoint Security

Enterprise Endpoints Aren't Ready for AI

Enterprises need to think about the impact on security budgets and resources as they adopt new AI-based applications.
