There are many defensive patterns in nature that also apply to information security. Here's how to defeat your predators in the high-stakes game of corporate survival and resiliency.

People say the Internet is a hostile network (which is true), and that got me thinking about other hostile environments, where a successful strategy results in resiliency and continuity. What if Mother Nature were the CISO? What would her strategy be? What capabilities could she give the prey species, so they could survive in the presence of many predators?

To get a better understanding of the defensive tactics of prey species, it is worth spending a minute talking about the dominant strategies of predators. The three that I'll highlight are cruising, ambush, and the blend of these, which I'll call cruising-ambush. All of these offer similarities to the threat landscape we have been experiencing on the Internet.

Cruising: This is where the predator is continually on the move to locate prey. It's a pattern we can see reflected when the adversary broadly scans the Internet for targets. These targets are stationary in the sense that, once a target is found, a connection can be made repeatedly.

Ambush: Here the predator sits and waits. This strategy relies on the prey's mobility to initiate encounters. On the Internet today, we see this ambush pattern in a compromised web server sitting and waiting for prey to connect and pull down the exploits. The majority of malware is distributed in this ambush pattern.

Cruising-ambush: The blended cruising-ambush is by far the most effective predator pattern. The idea is to minimize exposure while cruising and to deploy effective ambush resources, which in turn feed further cruising and close the loop in the pattern. A few threats exhibit this, such as a phishing campaign that broadly cruises for prey. Once the victim clicks on the phishing link, the attack quickly shifts to the ambush pattern, with a compromised web server sitting and waiting for the connection to download the malware.

Patterns of prey

There are many documented defensive patterns for prey species, and I'd like to explore the ones that can be applied to Internet security. In all of these cases, Mother Nature's common pattern is making the prey marginally too expensive for the predator to identify and/or pursue.

Certain prey species have raised the cost of observation and orientation so much that they operate outside their predators' perceptive boundaries. Camouflage is one technique; another is making parts of the organism expendable, as in a gecko's tail or a few bees in the colony. In Internet security, camouflage can be accomplished through cryptography or through random addressing within a massively large space like IPv6. For expendable parts, one can imagine a front-end system with 100 servers behind an application delivery controller (ADC), where any individual server can be lost without losing the service.
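To make the random-addressing idea concrete, here is a minimal Python sketch. It assumes a documentation prefix (`2001:db8::/64`) and a hypothetical scan rate; the function names `random_address` and `years_to_scan` are illustrative, not part of any product or standard.

```python
import ipaddress
import secrets


def random_address(prefix: str) -> ipaddress.IPv6Address:
    """Pick a uniformly random host address inside an IPv6 prefix."""
    network = ipaddress.IPv6Network(prefix)
    host_bits = 128 - network.prefixlen
    # secrets uses the OS CSPRNG, so the choice is not predictable.
    offset = secrets.randbits(host_bits)
    return network[offset]


def years_to_scan(prefix: str, probes_per_second: float) -> float:
    """Rough expected time for a cruising scanner to find one hidden host.

    Assumption: the scanner probes addresses without repeats, so on
    average it must cover about half of the prefix.
    """
    network = ipaddress.IPv6Network(prefix)
    expected_probes = network.num_addresses / 2
    seconds_per_year = 365 * 24 * 3600
    return expected_probes / probes_per_second / seconds_per_year


if __name__ == "__main__":
    print(random_address("2001:db8::/64"))
    # Assumed scan rate of one million probes per second.
    print(f"{years_to_scan('2001:db8::/64', 1e6):,.0f} years")
```

Even at the assumed rate of a million probes per second, sweeping a single /64 takes on the order of hundreds of thousands of years, which is the "outside the predator's perceptive boundary" effect described above.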

Another effective countermeasure to cruising found in nature is the dispersion of targets or the frequent changing of nonstationary targets. This raises the observation and orientation requirements of the predator. If the predator has to do more probing and searching in the reconnaissance phase, it becomes more easily detected.
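One way to picture why extra probing makes the predator easier to detect is a simple counter of distinct targets touched by each source. This is only a sketch: the class name `ProbeDetector` and the threshold value are assumptions, and a real detector would also need time windows and tolerance for legitimate crawlers.

```python
from collections import defaultdict


class ProbeDetector:
    """Flag sources that touch unusually many distinct targets."""

    def __init__(self, max_distinct_targets: int = 20):
        # Hypothetical threshold; tune it to the environment.
        self.max_distinct_targets = max_distinct_targets
        self._targets_by_source = defaultdict(set)

    def observe(self, source: str, target: str) -> bool:
        """Record one connection attempt.

        Returns True once the source has probed more distinct targets
        than the threshold allows.
        """
        seen = self._targets_by_source[source]
        seen.add(target)
        return len(seen) > self.max_distinct_targets


if __name__ == "__main__":
    detector = ProbeDetector(max_distinct_targets=3)
    for i in range(5):
        flagged = detector.observe("203.0.113.7", f"host-{i}")
        print(i, flagged)
```

When targets are dispersed or keep moving, a cruising adversary has to touch more addresses to find anything, so it crosses a threshold like this sooner.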

The last prey species pattern I find useful is tolerance to loss. Some species have found a way to divert the predator to the non-essential parts and have an enhanced ability to recover rapidly from the damage. Likewise, subsystems should be able to fail, and information about each failure should be used as input to the system's recovery processes.
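The tolerance-to-loss pattern can be sketched as a pool that treats a failed member as expendable and feeds the failure back into recovery. Everything here is hypothetical: `ServicePool`, `report_failure`, and the `spawn` callback stand in for whatever orchestration layer actually creates servers.

```python
import itertools


class ServicePool:
    """A service made of expendable, replaceable members."""

    def __init__(self, size, spawn):
        self.spawn = spawn  # callable that returns a fresh member
        self.members = [spawn() for _ in range(size)]
        self.failure_log = []  # failure information kept as recovery input

    def report_failure(self, member, reason):
        """Drop a failed member, record why, and grow a replacement."""
        if member not in self.members:
            return
        self.members.remove(member)
        self.failure_log.append((member, reason))
        self.members.append(self.spawn())


if __name__ == "__main__":
    counter = itertools.count()
    pool = ServicePool(3, lambda: f"srv-{next(counter)}")
    pool.report_failure("srv-1", "integrity check failed")
    print(pool.members)
    print(pool.failure_log)
```

The pool size stays constant while individual members come and go, and the failure log is available to whatever process decides how replacements should differ from the members that were lost.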

Species resilience

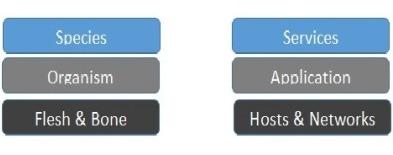

The game of survival and resiliency is at the level of species and not at the level of organism. Diversity, redundancy, and a high rate of change at the organism level provide stability at the species level. When we look at this pattern in information technology, we can quickly see the need for abstractions. For example, a web server farm of 10 servers (10 organisms) sits behind a load balancer that offers a service (the species).

Abstractions are available to us in our design of these systems, and we need to leverage them in the same way Mother Nature has over the past 3.8 billion years. Virtual servers, software-defined networking, virtual storage -- all the parts are at our disposal to design highly resilient species (services).

Prey species have found a way to establish a knowledge margin with their environment, and this is what we must do with our information systems. The systems you protect must continuously change based on two drivers: how long you think it will take your adversary to perform its reconnaissance, and whether you have detected the adversary's presence. Each time your systems change, the cost for your adversary to infiltrate and, most importantly, to remain hidden rises substantially. This is the dominant strategy found in nature.
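The two drivers can be expressed as a small decision rule. This is a sketch under stated assumptions: the function name `should_rotate`, the reconnaissance estimate, and the `safety_factor` margin are all illustrative values that a defender would have to choose.

```python
def should_rotate(
    seconds_since_change: float,
    estimated_recon_seconds: float,
    adversary_detected: bool,
    safety_factor: float = 0.5,
) -> bool:
    """Decide whether the protected system should change now.

    Driver 1: change before the adversary is expected to finish
    reconnaissance (scaled by an assumed safety margin).
    Driver 2: change immediately when the adversary is detected.
    """
    if adversary_detected:
        return True
    return seconds_since_change >= estimated_recon_seconds * safety_factor


if __name__ == "__main__":
    # Assumed one-hour reconnaissance estimate.
    print(should_rotate(600, 3600, adversary_detected=False))
    print(should_rotate(1800, 3600, adversary_detected=False))
    print(should_rotate(60, 3600, adversary_detected=True))
```

Changing at a fraction of the estimated reconnaissance time is one way to keep the knowledge margin on the defender's side, since what the adversary has learned goes stale before it can be used.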
